InquirySpace

Importance

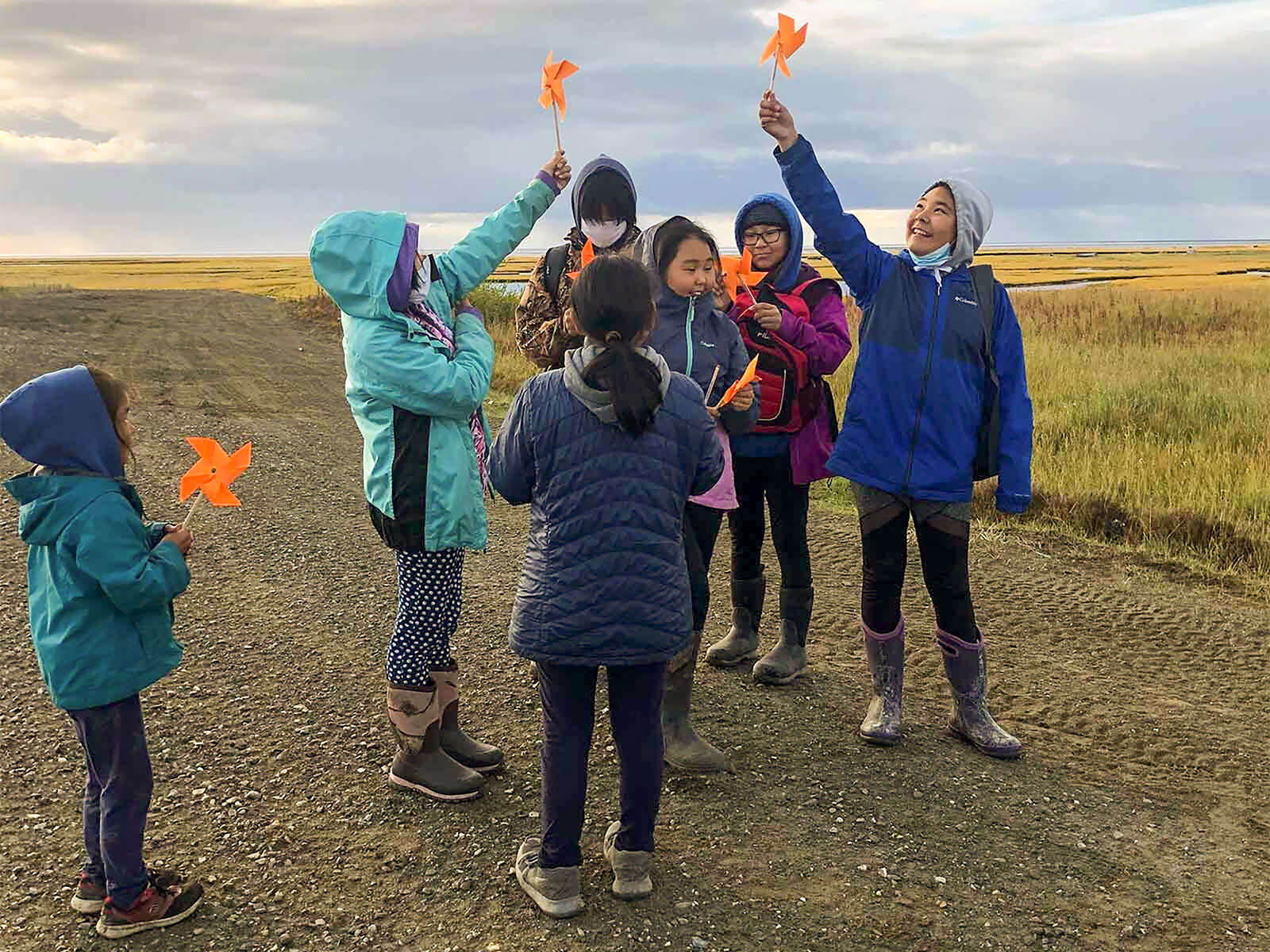

Students should learn science the way scientists do, using inquiry-based learning. But finding student projects that are feasible and interesting is difficult. InquirySpace gives students tools, guidance, and ideas that greatly expand the range and sophistication of meaningful open-ended science investigations.

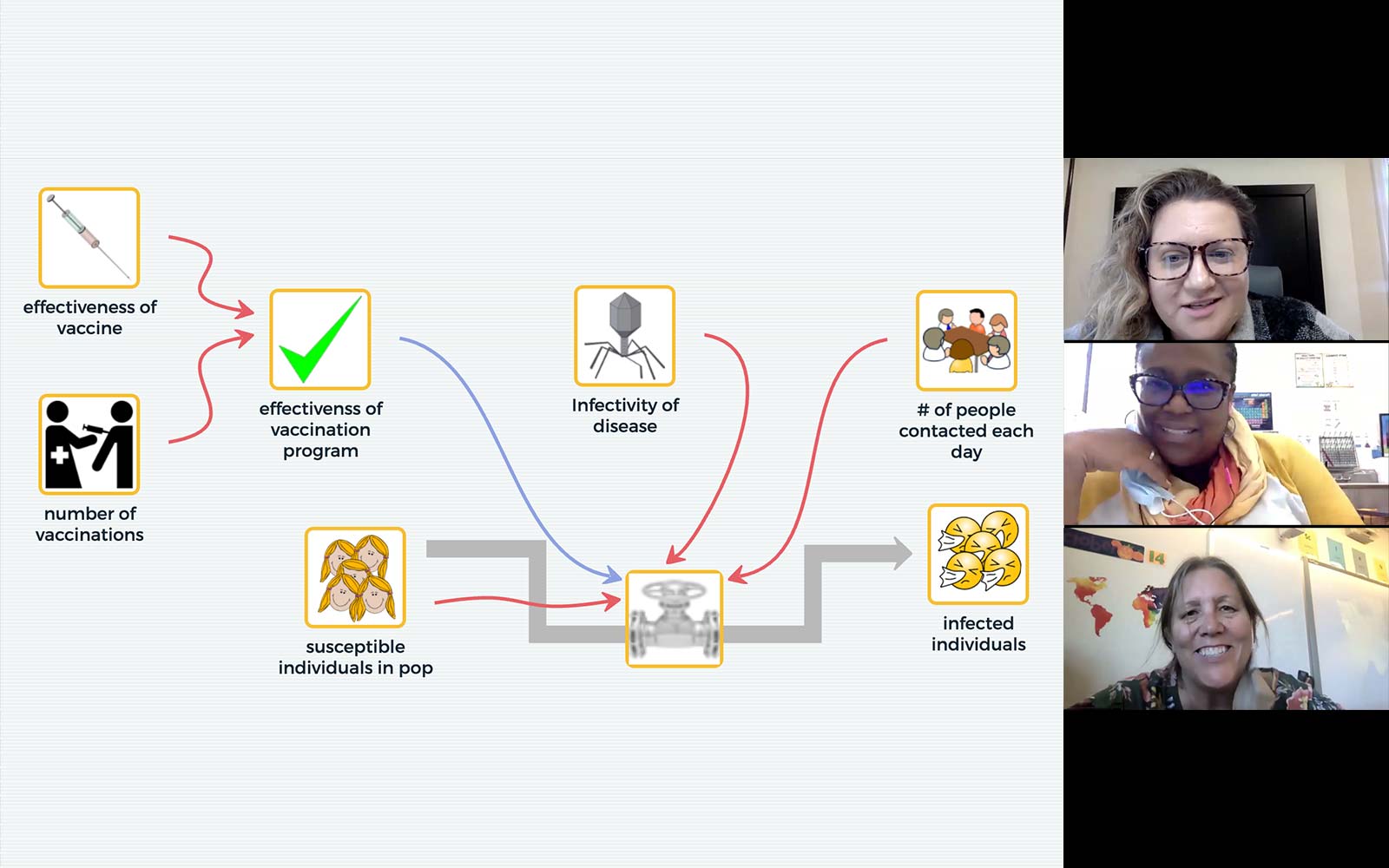

Every student should have the chance to experience the exciting practice of science. But far too often, students encounter only highly structured “cookbook” labs in their science classrooms. We’re combining instructional guidance with a software environment that integrates probeware, video analysis tools, and data exploration capabilities, to help students move from fundamental data analysis and scaffolded experiments to open experiments of their own design.

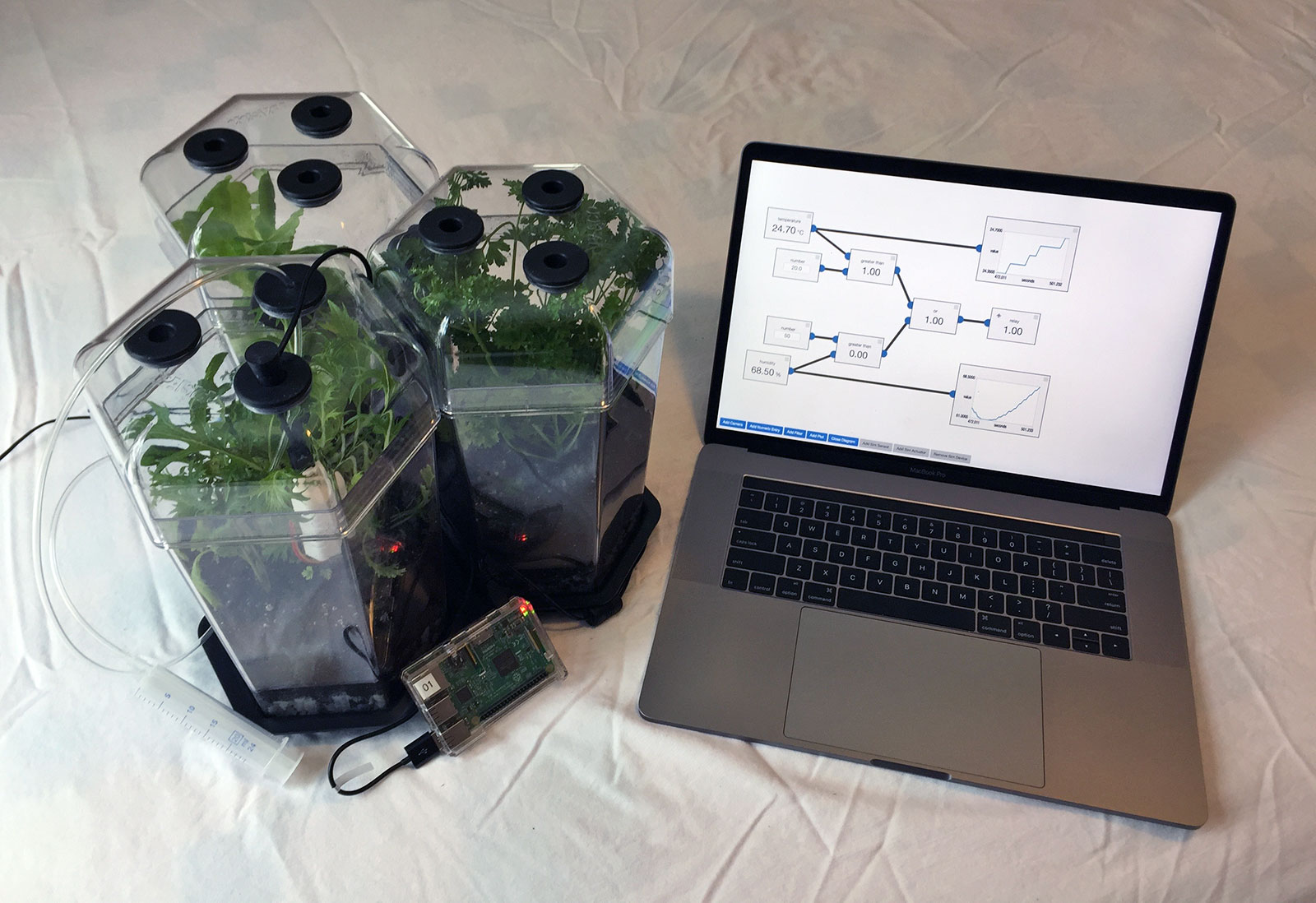

InquirySpace uses innovative technologies: the versatile modeling environments of NetLogo and the Molecular Workbench, real-time data collection from probes and sensors, and the powerful visual data exploration capabilities of our Common Online Data Analysis Platform (CODAP). These tools are integrated into a coherent, Web-based environment enabling rich, collaborative scientific inquiry.

Student materials are designed to help students experience open-ended investigations through a sequence of four steps with increasing degrees of student autonomy, culminating in an open-ended exploration of their own design. Physics activities are already available. New activities are being designed for biology and chemistry.

Research

InquirySpace has demonstrated that typical students can learn to use an integrated set of computer-based tools to undertake sophisticated, open-ended investigations that resemble the approach and thinking of practicing scientists.

- We identified and characterized a form of student reasoning during inquiry-based experimentation, which we call Parameter Space Reasoning (PSR). PSR is associated with planning experiments, operationalizing a set of parameters, navigating the parameter space through multiple experimental runs, identifying patterns in parameter space plots, and reflecting on sources of error. After using InquirySpace, students improved their understanding of PSR and applied it in near and medium transfer contexts, but not in far transfer contexts.

- We found seven distinct patterns of learning in the Ramp Game. In one pattern, students’ scores did not improve. In another, students mastered the game quickly. In the remaining five, students struggled at first and then learned successfully, at different rates and with different degrees of success.

- Bayesian Knowledge Tracing (BKT) is a popular model for tracking student progress in learning systems such as intelligent tutoring systems. We analyzed the mathematical structure of the BKT model and constructed a Monte Carlo BKT algorithm to analyze individual students’ knowledge growth during the Ramp Game, which was developed as part of the InquirySpace (IS) project. We found that the Monte Carlo BKT analysis can detect a student’s knowledge growth during game play.

- We developed a metric based on the sample entropy concept for measuring the systematicity of students’ experimentation patterns in an open-ended simulation environment where students have a number of parameters at their disposal to explore. Unlike other indicators of systematicity proposed in the literature, the sample entropy metric provides a continuous scale and draws on an up-to-date computational algorithm applied to dynamic processes in physical and biological systems. This sample entropy-based metric correlates significantly with student learning outcomes related to (1) how well students described the nature of the relationship explored during their experimentation and (2) whether students coordinated their claims with the data collected from their experimentation.

- Digital games can provide an opportunity for players to learn new scientific knowledge. Players’ transactions with digital games and related game performances can be automatically collected in time-stamped log files. In this study, we collected data from log files generated by high school students playing a serious game on a computer and analyzed game score patterns based on Monte Carlo Bayesian Knowledge Tracing. We found a statistically significant, positive scaffolding effect on knowledge gain when students used a graphing tool as compared to when they did not.
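
For readers unfamiliar with Bayesian Knowledge Tracing, the sketch below shows the standard, textbook BKT update, not the Monte Carlo variant described above. The function names, the parameter values, and the reduction of each game attempt to a binary success or failure are all assumptions made for illustration; the project's own analysis worked from game scores in log files.

```python
def bkt_update(p_known, correct, p_learn, p_slip, p_guess):
    """Return P(skill is known) after observing one attempt (standard BKT)."""
    if correct:
        # A success comes either from knowing and not slipping, or from guessing.
        evidence = p_known * (1 - p_slip) + (1 - p_known) * p_guess
        posterior = p_known * (1 - p_slip) / evidence
    else:
        # A failure comes either from knowing but slipping, or from not guessing.
        evidence = p_known * p_slip + (1 - p_known) * (1 - p_guess)
        posterior = p_known * p_slip / evidence
    # After the attempt, an unlearned skill may become learned.
    return posterior + (1 - posterior) * p_learn


def trace_knowledge(outcomes, p_init=0.2, p_learn=0.15, p_slip=0.1, p_guess=0.2):
    """Run the update over a sequence of True/False outcomes.

    The default parameter values are illustrative placeholders, not fitted values.
    """
    estimates = []
    p_known = p_init
    for correct in outcomes:
        p_known = bkt_update(p_known, correct, p_learn, p_slip, p_guess)
        estimates.append(p_known)
    return estimates


if __name__ == "__main__":
    # Hypothetical attempt sequence: one failure followed by three successes.
    for step, estimate in enumerate(trace_knowledge([False, True, True, True]), 1):
        print(f"attempt {step}: P(known) = {estimate:.3f}")
```

Each estimate is the model's belief that the student has the skill after that attempt, so a rising sequence is what "detecting knowledge growth" looks like in this simplified setting.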

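The sample entropy idea mentioned above can be illustrated with the standard definition: count how often short runs of values that match each other continue to match when extended by one more value. The sketch below is a minimal version of that general definition, not the project's metric. The function name, the absolute tolerance `r`, and the idea of feeding it the sequence of parameter values a student chose across runs are assumptions for illustration.

```python
import math


def sample_entropy(series, m=2, r=0.2):
    """Standard sample entropy of a numeric sequence.

    m is the template length and r is an absolute matching tolerance.
    Returns infinity when no matches are found, where the value is undefined.
    """
    n = len(series)

    def count_matches(length):
        # Use the same n - m starting points for both template lengths.
        templates = [series[i:i + length] for i in range(n - m)]
        count = 0
        for i in range(len(templates)):
            for j in range(i + 1, len(templates)):
                # Chebyshev distance: the largest element-wise difference.
                distance = max(abs(a - b) for a, b in zip(templates[i], templates[j]))
                if distance <= r:
                    count += 1
        return count

    b = count_matches(m)      # matching pairs of length m
    a = count_matches(m + 1)  # pairs that still match at length m + 1
    if a == 0 or b == 0:
        return float("inf")
    return -math.log(a / b)


if __name__ == "__main__":
    # Hypothetical parameter choices across runs (for example, a ramp setting).
    systematic = [1, 2, 3, 4] * 5
    haphazard = [1, 2, 3, 1, 2, 4, 3, 1, 4, 2, 2, 3]
    print(sample_entropy(systematic), sample_entropy(haphazard))
```

A strictly repeating sweep such as 1, 2, 3, 4, 1, 2, 3, 4 scores zero, because every matching run continues to match; less regular sequences score higher, which is what gives the measure a continuous scale.
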
Videos

View InquirySpace videos and more on the Concord Consortium YouTube Channel.

Publications

- Haavind, S. (2024). A roadmap for virtual professional learning: Bringing inquiry science practices to life through teacher professional community. School Science and Mathematics. https://doi.org/10.1111/ssm.12646

- Stephens, A. L. (2024). From graphs as task to graphs as tool. Journal of Research in Science Teaching, 61(5), 1206–1233. https://doi.org/10.1002/tea.21930

- Clair, N. S., Stephens, A. L., & Lee, H.-S. (2023). ‘But, is it supposed to be a straight line?’ Scaffolding students’ experiences with pressure sensors and material resistance in a high school biology classroom. International Journal of Science Education. https://doi.org/10.1080/09500693.2023.2260064

- Lee, H.-S., Gweon, G.-H., Webb, A., Damelin, D., & Dorsey, C. (2023). Measuring epistemic knowledge development related to scientific experimentation practice: A construct modeling approach. Science Education. https://doi.org/10.1002/sce.21836

- The Concord Consortium (2022). Teacher innovator interview: Julia Wilson. @Concord, 26(1), 15.

- Farmer, T. (2020). Independent experimentation for the remote classroom. @Concord, 24(2), 12–13.

- Gweon, G.-H., & Lee, H.-S. (2020). Tracking students' data collection from a simulation model: Teacher framing and student variations. Annual Meeting of the National Association for Research in Science Teaching (NARST), Portland, OR. (Conference canceled).

- Haavind, S., & Murtha, M. (2020). Accessible physics for all: Providing equity of access for high school physics with extended experimentation and data analysis. The Science Teacher, 87(9), 54–58.

- Stephens, A. L., Clair, N. S., Ediss, B., & Lee, H.-S. (2020). Scaffolding a lesson with noisy data: One physics lesson, two teacher approaches. International Conference of the Learning Sciences (ICLS), Nashville, TN. (Conference canceled).

- The Concord Consortium (2020). Innovator interview: Sarah Haavind. @Concord, 24(1), 15.

- Stephens, A. L., Clair, N. S., Ediss, B., Farmer, T., & Damelin, D. (2020). Small group reasoning about unexpected sensor readings when scaffolded (or not): One physics lesson, four teachers. Annual Meeting of the National Association for Research in Science Teaching (NARST), Portland, OR. (Conference canceled).

- Stephens, L., & Clair, N. S. (2019). Supporting student agency in scientific investigations: Leveraging uncertainty. Paper presented at the Annual Meeting of the National Association for Research in Science Teaching (NARST), Baltimore, MD.

- Gweon, G.-H., & Lee, H.-S. (2019). Automatic detection of control of variables strategy in open-ended scientific experimentation. Paper presented at the Annual Meeting of the National Association for Research in Science Teaching (NARST), Baltimore, MD.

- Damelin, D., Lee, H.-S., & Stephens, L. (2018). The InquirySpace model of scientific experimentation. @Concord, 22(2), 4–6.

- The Concord Consortium (2018). Innovator interview: Hee-Sun Lee. @Concord, 22(1), 15.

- Farmer, T. (2017). Monday's lesson: Exploring data with the Ramp Game. @Concord, 21(1), 7.

- Stephens, L., & Pallant, A. (2016). Inquiry space: Using graphs as a tool to understand experiments. Paper presented at the 2016 Annual Meeting of the American Educational Research Association (AERA), Washington, DC.

- Lee, H.-S., Gweon, G.-H., Dorsey, C., Tinker, R., Finzer, W., Damelin, D., Kimball, N., Pallant, A., & Lord, T. (2015). How does Bayesian knowledge tracing model student development of knowledge about a simple physical system? Proceedings in Learning Analytics & Knowledge Conference 2015, Poughkeepsie, NY.

- Gweon, G.-H., Lee, H.-S., Dorsey, C., Tinker, R., Finzer, W., & Damelin, D. (2015). Tracking student progress in a game-like learning environment with a Monte Carlo Bayesian knowledge tracing model. Paper presented at the annual meeting of the American Physical Society, San Antonio, TX.

- Gweon, G.-H., Lee, H.-S., Dorsey, C., Tinker, R., Finzer, W., & Damelin, D. (2015). Tracking student progress in a game-like learning environment with a constrained Bayesian knowledge tracing model. Proceedings in Learning Analytics & Knowledge Conference 2015, Poughkeepsie, NY.

- Tinker, R. (2015). InquirySpace: A place for doing science. Concord, MA: The Concord Consortium.

- Pallant, A., Lee, H.-S., & Kimball, N. (2015). Analytics and student learning: An example from InquirySpace. @Concord, 19(1), 8–9.

- Finzer, W., & Tinker, R. (2015). Under the hood: Embedding a simulation in CODAP. @Concord, 19(1), 14.

- Finzer, W. (2014). Hierarchical data visualization as a tool for developing student understanding of variation of data generated in simulations. In Proceedings of the Ninth International Conference on Teaching Statistics (Vol. 6). Voorburg: International Statistical Institute.

- Hazzard, E. (2014). A new take on student lab reports. The Science Teacher.

- Tinker, R., & Hazzard, E. (2012). InquirySpace: A space for real science. @Concord, 16(2), 8–9.

Blog Posts

Learn more about the InquirySpace project at the Concord Consortium blog.

- For Equity and Justice, Start Where Students Are and Help Them Find Answers

- CODAP videos help teachers and students with NGSS practice of Analyzing and Interpreting Data

- The Science Teacher: Accessible Physics for All

Activities

View, launch, and assign activities developed by this project at the STEM Resource Finder.