The InquirySpace Model of Scientific Experimentation

Laboratory investigations are a mainstay of the science classroom, and have historically been the pathway for students to “experience science.” Engaging in science and engineering practices is one of the three dimensions of A Framework for K-12 Science Education and the Next Generation Science Standards (NGSS). A naive reading of these practices might leave one thinking that we already do these things.

However, traditional labs typically engage students in only a subset of the practices, such as analyzing and interpreting data, and obtaining, evaluating, and communicating information. One of the key shifts in the NGSS is toward student agency in their scientific explorations. This is exemplified in other practices, such as asking questions, developing and using models, planning and carrying out investigations, and engaging in argument from evidence. Students should be learning science by doing real science, and to do that they need to struggle with the ideas of scientific experimentation, data analysis, and iterative refinement of experiments.

Our National Science Foundation-funded InquirySpace project is developing and researching students’ ability to engage in open-ended science investigations of their own design. We are researching what students require to be empowered as learners and what conditions are necessary to foster their ability to conduct robust, data-rich investigations. We are beginning to understand how authentic scientific investigation comes about in the classroom. And we are uncovering some surprising insights.

Doing labs “correctly”

Traditionally, managing the complexity of doing science has been accomplished by providing students with clear instructions and a narrowly scoped, predefined experiment. However, if we’re expecting learners to grow, we need to begin by giving them opportunities to exercise independence, take responsibility, and make corrections, right from the start. Giving students agency is central to fostering independent investigation—it may even be central to understanding science itself.

Current laboratory scenarios rarely begin with this premise. Investigating phenomena is complicated and an hour of classroom time is precious. Consequently, the focus becomes ensuring that the lab runs smoothly, which means students follow a pre-set step-by-step procedure, collect data as prescribed, and don’t waste time.

But consider what learners take away from this overly scripted exercise. First, they may envision laboratory procedures as something to be followed rather than forged. The idea of designing and refining an investigation, and gaining ownership of the science practices involved, vanishes, or at best is sidelined. Second, students may implicitly learn to consider data as a product rather than a process. Students often try to create a data table with the “right answers” in order to reveal a result they—and the teacher—know is predetermined. In these situations, uncertainty or variability in data is something to avoid and is interpreted as the outcome of incorrect execution (i.e., a “wrong answer”). The concept of unique discovery never really enters the discussion.

The elements of agency

Scaffolding students toward independence means empowering them with agency in three critical aspects of scientific investigation: 1) designing experimental scenarios that include collecting, inspecting, cleaning, and analyzing data, 2) addressing sources of uncertainty and variability associated with the limitations and constraints of data collection, analysis, and interpretation, and 3) engaging in argument from evidence, developing scientific explanations, and communicating those ideas.

A student preparing for a laboratory investigation has a mental model of scientific experimentation. The model may include: ideas about how data are gathered, strategies for designing experiments, what is involved in collecting data, the role lab apparatus play in investigating and answering a question, and much more. The student also has a mental model of the scientific phenomenon she’s preparing to investigate. This model might include ideas about how electricity works, what bacteria need to grow, what mechanism is at the heart of chemical reactions—or a thousand other concepts related to the phenomenon at hand.

To help students develop agency in the context of scientific experimentation, we need to recognize that both models are at work when a student explores phenomena. Her mental model of the phenomenon influences what she decides to observe and what questions she asks. Her mental model of experimentation, in turn, influences the design of the experiment, including what questions she considers investigable, the structure of her data collection, and what equipment she selects as appropriate to answer those questions. What’s critical to recognize is that refining and building students’ competence at independent investigation involves a continuous interaction between the two models.

There’s another factor as well. Scientific investigations aren’t thought experiments. They use real materials, in the real world. A balance has friction between its parts. A microscope is limited by focus and optical resolution. Scientific phenomena are complex and indistinct. Objects being measured—and measuring devices themselves—have finite dimensions. The reality of experimentation is that we must use the materials of the real world to investigate the world’s phenomena—and that these materials always resist our efforts in some way or another. In our attempt to keep things simple for students, we may be tempted to minimize the resistance an experiment’s materials introduce, but some measure of resistance is always unavoidable.

Taking all this into account, the process of investigation becomes an interplay of three elements: the student’s model of scientific experimentation, her model of the phenomenon, and the material resistance* of the world itself (Figure 1). By leveraging and honing her two mental models, she is able to design increasingly focused investigations. She then collects data for analysis, which results in a scientific explanation that demonstrates her understanding of the phenomenon. Initial stages of investigation involve cycles of designing, collecting, analyzing, and explaining based on feedback from the material world. This leads to more formalized and deliberate cycles as she refines her understanding and process.

The InquirySpace approach

Our goal is to support students in learning science by doing science. The core of this approach involves students having the skills and the charge to investigate the world around them. Rather than following a recipe such as those found in classical labs, students should be asking questions like: What can I measure and observe? How can I design an experiment to collect data? Once I have data, how certain am I of the patterns or relationships those data suggest? Do I need to collect more data? Do I need to redesign my experiment? Such questions are at the center of the changes in classroom laboratory experimentation we wish to bring about.

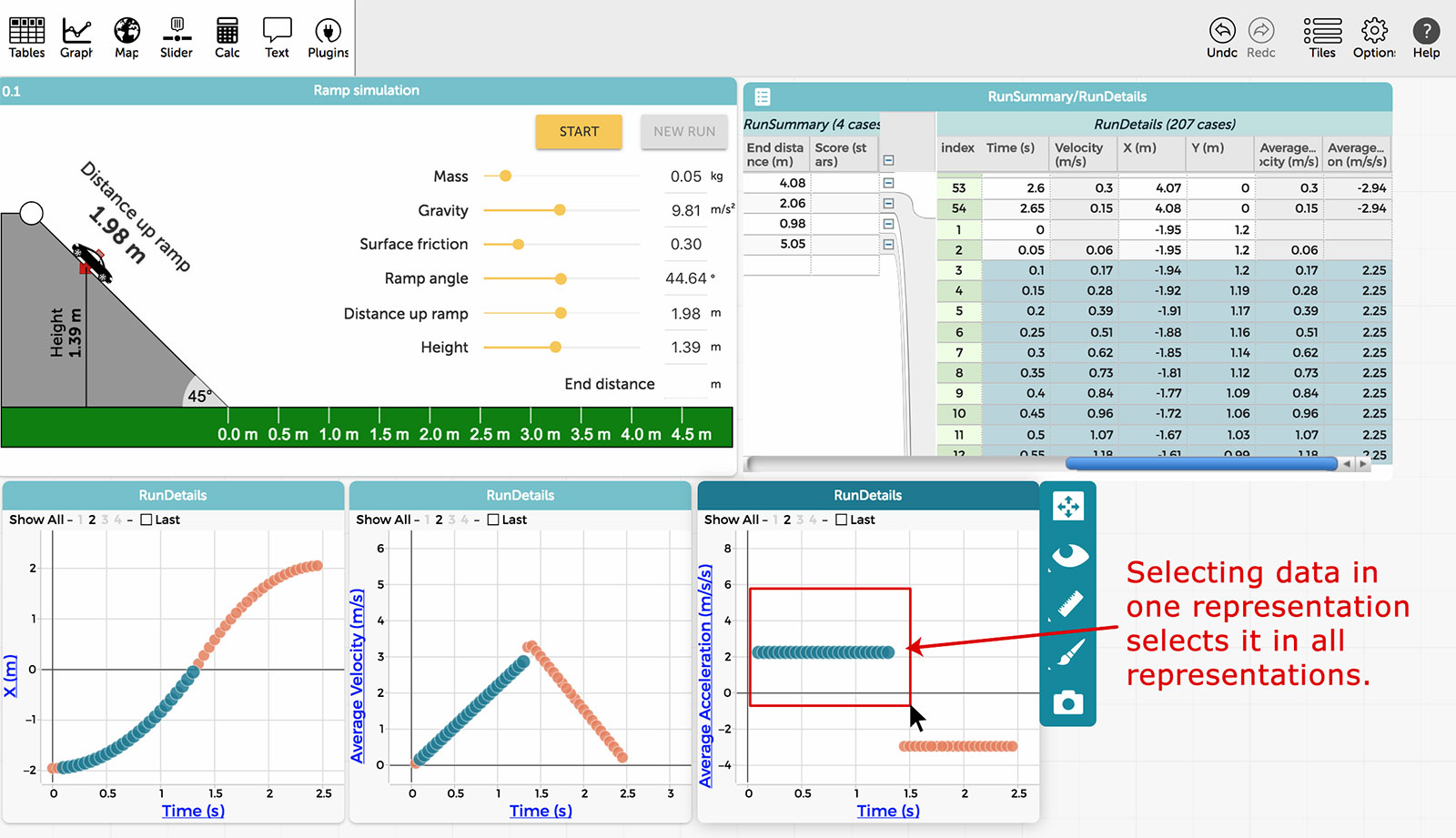

Since 2012, InquirySpace has been developing, classroom-testing, and revising software to facilitate student-led inquiry, as well as curricular supports to scaffold students in building the skills and mental models of experimentation necessary to engage in scientific inquiry. The software development includes expansion and refinement of our Common Online Data Analysis Platform (CODAP), an easy-to-use web-based tool, and plugins for CODAP that allow direct data collection via sensors and data generation by simulations. CODAP has been uniquely designed for students to engage in sense-making from data, utilizing a simple drag-and-drop interface for organizing data into hierarchical structures, generating visualizations of the data, and using graphs not just as a means for displaying data, but as a way to filter and explore data across linked representations (Figure 2). Students can visualize and analyze data as they are collected in real time and make adjustments to refine their experiments, e.g., constructing the physical apparatus to collect data, selecting and using measuring devices properly, considering how much data to collect, and identifying and minimizing error sources.
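The questions of how much data to collect and how to judge error sources can be made concrete with a small calculation. The following is a minimal Python sketch, not CODAP code or an InquirySpace activity: it assumes a hypothetical pendulum experiment, uses invented measurements, groups trials by experimental condition, and flags any condition whose standard error of the mean exceeds an arbitrarily chosen 1% of the mean. The names `trials`, `summarize`, and `needs_more_data`, and the threshold itself, are illustrative assumptions.

```python
from math import sqrt
from statistics import mean, stdev

# Hypothetical, invented measurements for illustration only:
# pendulum length (cm) -> repeated timings of one period (s).
trials = {
    20: [0.91, 0.88, 0.90, 0.93],
    40: [1.25, 1.29, 1.27],
    60: [1.52, 1.58],
}


def summarize(values):
    """Return (mean, standard error of the mean) for repeated measurements."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two trials to estimate variability")
    return mean(values), stdev(values) / sqrt(n)


def needs_more_data(values, max_relative_error=0.01):
    """True when the standard error exceeds the chosen fraction of the mean.

    The 1% default is an arbitrary assumption, not a recommended standard.
    """
    avg, sem = summarize(values)
    return sem / abs(avg) > max_relative_error


if __name__ == "__main__":
    # One summary line per experimental condition (pendulum length).
    for length, periods in sorted(trials.items()):
        avg, sem = summarize(periods)
        verdict = "collect more trials" if needs_more_data(periods) else "ok"
        print(f"{length} cm: mean={avg:.3f} s, SEM={sem:.3f} s -> {verdict}")
```

A student could adapt a check like this while data are still being collected, adding trials to a condition until the estimate is steady enough to support the claim she wants to make.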

We are developing on-ramps to help students build, over time, not only the technical skills of scientific investigation but also the conceptual skills needed to undertake student-led, student-designed experiments. These on-ramps begin with significant scaffolding that fades over time, culminating in the exploration of a phenomenon of the student’s choosing within the relevant scientific domain of physics, chemistry, or biology.

Such independently designed student experiments differ from the traditional lab in several ways. Students begin by “messing around,” exploring what is possible and how the physical limitations of their measuring devices and experimental materials might influence the questions they can ask and answer. While this may seem a bit chaotic at first, it is a necessary step for students to gain agency in engaging with science practices, and it informs their subsequent work as they home in on a more formalized procedure of their own design. When students no longer follow lab instructions blindly, they become actively engaged with phenomena, working to extract their secrets and taking the first steps in seeing the world through the lens of science.

* Pickering, A. (2010). The mangle of practice: Time, agency, and science. Chicago: University of Chicago Press.

Dan Damelin (ddamelin@concord.org) is a senior scientist.

Hee-Sun Lee (hlee@concord.org) is a senior research scientist.

Lynn Stephens (lstephens@concord.org) is a research scientist.

This material is based upon work supported by the National Science Foundation under grants IIS-1147621 and DRL-1621301. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science Foundation.