High-Adventure Science

Importance

Many students tune out of science not because they cannot master it, but because they don’t see why science is relevant to their lives. The emphasis on covering content also gives students the misconception that science is about what is known. They get no exposure to how science progresses, what is unknown, and what motivates scientists. Scientists get excited about what they don’t know yet and regard these unsolved mysteries as challenges. Can we generate similar curiosity and enthusiasm among students? Can we encourage students to analyze data and models to seek answers? Can we get them to think like scientists?

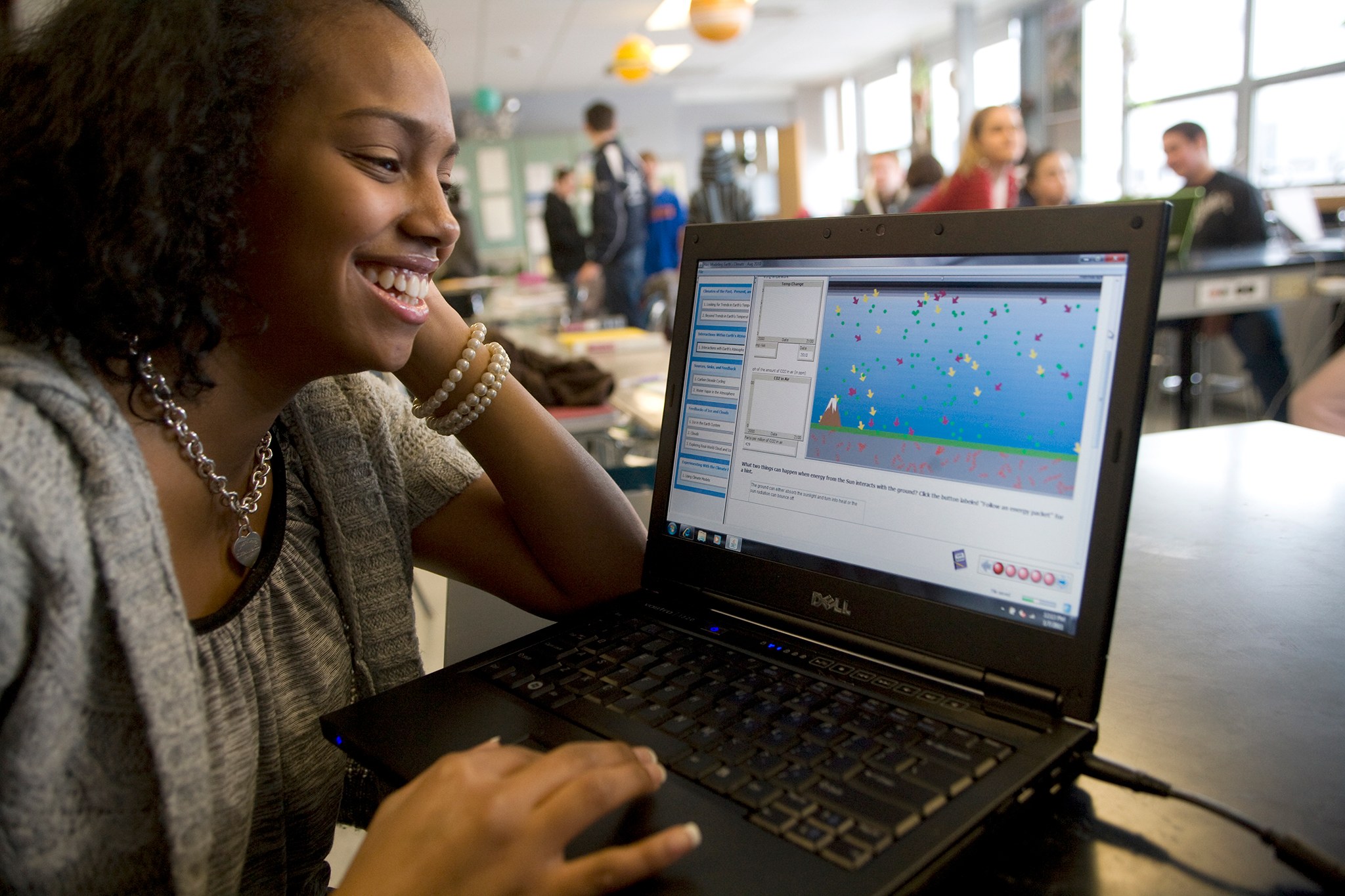

We’re bringing the excitement of scientific discovery to students by letting them explore pressing unanswered questions using the same methods that practicing scientists use. The goal of our research is to determine whether the active exploration of these questions helps students come to view science as a dynamic, evolving process.

With our partners at National Geographic Education, we’ve developed computer-based investigations around compelling unanswered questions in Earth and space science to help students learn science like scientists. Six curriculum units have been developed, guided by the following design principles: 1) engage students in real-world frontier science; 2) have students interpret data collected by scientists to make sense of the scientific phenomena they are investigating; 3) engage students in experiments with dynamic computer-based Earth systems models; and 4) support scientific argumentation using evidence from data and models, and help students attribute the sources of uncertainty associated with their claims and evidence.

In the words of Lewis Thomas, our goal has been to help students “see science as the high adventure it really is, the wildest of all explorations ever taken by human beings….” This project explores a fresh approach to bringing science into the classroom in a way that is true to the spirit of science, uses computer technology, is based on current research in effective online learning, and has been shown to have a measurable impact on science teaching and learning.

Research

We conducted research in the classrooms of 53 field-test teachers with over 4,500 students.

- Though addressing sources of uncertainty is an important part of doing science, it has largely been neglected in assessing students’ scientific argumentation. We defined a scientific argumentation construct consisting of claim, evidence-based explanation, uncertainty rating, and uncertainty attribution and validated that this task structure can measure students’ ability to engage in uncertainty-infused argumentation.

- Modeling and argumentation are two important scientific practices students need to develop throughout their education. We investigated how middle and high school students construct a scientific argument based on evidence from computational models. Our results indicate that students used models and model-based graphs as evidence to confirm their claims. Students’ assessments of the uncertainty in their arguments more often reflected an evaluation of their personal knowledge or abilities related to the tasks than a critical examination of the scientific evidence resulting from models. Some students were able to elaborate on the limitations of model-based evidence for making definite scientific claims.

- Over the last several decades there has been a growing awareness of the ways humans impact Earth systems. As global problems emerge, educating the next generation of citizens to be able to make informed choices related to future outcomes is increasingly important. The challenge is figuring out how to prepare students to think about complex systems and sustainability. We exposed students to interactive computer-based dynamic Earth systems models and embedded prompts to help them focus on stocks and flows within the system. This approach helps students identify important resources in the models, explain the processes that are changing the availability of the stock, and explore real-world examples.

- How do students articulate uncertainty when they are engaged in structured scientific argumentation tasks? We investigated 302 high school students who completed four uncertainty-infused scientific argumentation tasks related to detecting exoplanets. We found that while the majority of students had difficulties in expressing uncertainty in either explanation or uncertainty attribution, students who did express uncertainty in their explanations did so scientifically without being prompted explicitly. Students’ uncertainty ratings and attributions revealed a mix of their personal confidence and uncertainty related to science. If the exoplanet task presented noisy data, students were less likely to express uncertainty in their explanations.


Videos

View the project videos and more on the Concord Consortium YouTube Channel.

Publications

- Olivera-Aguilar, M., Lee, H.-S., Pallant, A., Belur, V., Mulholland, M., & Liu, O. L. (2022). Comparing the effect of contextualized versus generic automated feedback on students’ scientific argumentation (Research Report No. RR-22-03). ETS.

- Lee, H.-S., Gweon, G.-H., Lord, T., Paessel, N., Pallant, A., & Pryputniewicz, S. (2021). Machine learning-enabled automated feedback: Supporting students’ revision of scientific arguments based on data drawn from simulation. Journal of Science Education and Technology, 30, 168-192.

- Pallant, A. (2020). Perspective: Transforming Earth science education with technology. @Concord, 23(3), 2-3.

- Harmon, S., & Pryputniewicz, S. (2020). Can we feed a growing population? Using simulations to engage students. @Concord, 23(3), 12-13.

- Lee, H.-S. (2020). Making uncertainty accessible to science students. @Concord, 23(3), 14-15.

- Pryputniewicz, S., & Pallant, A. (2019). Automated scoring helps student’s arguments. @Concord, 23(1), 10-11.

- Pallant, A., Lee, H.-S., & Pryputniewicz, S. (2019). How to support secondary school students’ consideration of uncertainty in scientific argument writing: A case study of a High-Adventure Science curriculum module. Journal of Geoscience Education.

- Lee, H.-S., Pallant, A., Pryputniewicz, S., Lord, T., Mulholland, M., & Liu, O. L. (2019). Automated text scoring and real-time adjustable feedback: Supporting revision of scientific arguments involving uncertainty. Science Education, 103(3), 590-622.

- Pei, B., Xing, W., & Lee, H.-S. (2019). Analyzing student-augmented visual artifacts through digital image processing in the context of scientific argumentation. British Journal of Educational Technology.

- Harmon, S., Pallant, A., & Pryputniewicz, S. (2019). Using Scientific Argumentation to Understand Human Impact on the Earth. The Science Teacher, 86(6), 28-36.

- Mutch-Jones, K., Gasca, S., Pallant, A., & Lee, H.-S. (2018). Teaching with interactive computer-based simulation models: Instructional dilemmas and opportunities in the High-Adventure Science project. School Science and Mathematics, 1–11. doi:10.1111/ssm.12278

- Pallant, A., & Lee, H.-S. (2017). Teaching sustainability through systems dynamics: Exploring stocks and flows embedded in dynamic computer models of an agricultural system. Journal of Geoscience Education, 65(2), 146-147.

- Pallant, A. (2017). High-Adventure Science: Exploring evidence, models, and uncertainty related to questions facing scientists today. The Earth Scientist, 33, 23-28.

- Pallant, A., Pryputniewicz, S., & Lee, H.-S. (2017). The future of energy. The Science Teacher, 84(3), 61-68.

- Lee, H.-S., Pallant, A., Lord, T., & Liu, O. L. (2017). Articulating uncertainty attribution as part of critical epistemic practice of scientific argumentation. In Smith, B. K., Borge, M., Mercier, E., and Lim, K. Y. (Eds.). Making a difference: Prioritizing equity and access in CSCL, 12th International Conference on Computer Supported Collaborative Learning (CSCL) 2017, Volume 2. (pp. 135-142). Philadelphia, PA: International Society of the Learning Sciences.

- Zhu, M., Lee, H.-S., Wang, T., Liu, O. L., Belur, V., & Pallant, A. (2017). Investigating the impact of automated feedback on students’ scientific argumentation. International Journal of Science Education, 1–21.

- Harmon, S. (2017). Developing a scientific argument: Putting the practice into practice. Kentucky Science Assessment System Newsletter, 21(2), 12-13.

- Wright, K., Pallant, A., & Lee, H.-S. (2017). Appropriating a climate science discourse about uncertainty in online lessons. In Smith, B. K., Borge, M., Mercier, E., and Lim, K. Y. (Eds.). Making a difference: Prioritizing equity and access in CSCL, 12th International Conference on Computer Supported Collaborative Learning (CSCL) 2017, Volume 2. (pp. 577-580). Philadelphia, PA: International Society of the Learning Sciences.

- Lord, T., & Pallant, A. (2016). Can a Robot Help Students Write Better Scientific Arguments? @Concord, 20(1), 8-9.

- Xing, W., Lee, H.-S., & Pallant, A. (2016). Text mining in written scientific argumentation using Latent Dirichlet Allocation. Paper presented at the Annual Meeting of the American Educational Research Association. Washington, D.C.

- Pallant, A. (2015). High-Adventure Science. Teachers Clearinghouse for Science and Society Education Newsletter, XXXIV(1), 7.

- Pallant, A., & Pryputniewicz, S. J. (2015). Great questions make for great science education. @Concord, 19(1), 4-6.

- Pallant, A., & Lee, H.-S. (2015). Constructing scientific arguments using evidence from dynamic computational climate models. Journal of Science Education and Technology, 24(2-3), 378-395.

- Lee, H.-S., Liu, O. L., Pallant, A., Roohr, K. C., Pryputniewicz, S., & Buck, Z. (2014). Assessment of uncertainty-infused scientific argumentation. The Journal of Research in Science Teaching, 51(5), 581-605.

- Buck, Z., Lee, H.-S., & Flores, J. (2014). I’m sure there may be a planet: Student articulation of uncertainty in argumentation tasks. International Journal of Science Education, 36(4), 2391-2420.

- Pallant, A. (2014). Monday’s lesson: Modeling an agricultural system. @Concord, 18(1), 7.

- Pallant, A. (2013). Encouraging students to think critically about Earth’s systems and sustainability. The Earth Scientist, 29(4), 13-17.

- Pallant, A. (2013, May). No simple answers—How models and data reveal the science behind environmental topics. The MEES Observer.

- Pallant, A. (2013). The future of fracking: Exploring human energy use. @Concord, 17(1), 10-11.

- Pallant, A., Damelin, D., & Pryputniewicz, S. (2013). Deep space detectives. The Science Teacher, 80(2), 55-60.

- Pallant, A., Lee, H.-S., & Pryputniewicz, S. (2012). Modeling Earth’s climate. The Science Teacher, 79(7), 31-36.

- Pallant, A., Lee, H.-S., & Pryputniewicz, S. (2012). Exploring the unknown. The Science Teacher, 79(3), 60-65.

- Pallant, A. (2011). Looking at the evidence: What we know. How certain are we? @Concord, 15(1), 4-6.

- Pallant, A. (2010). Modeling the unknown is high adventure science. @Concord, 14(1), 6-7.

Conference Publications

- Guo, Y., Xing, W., & Lee, H.-S. (2016). Identifying students’ mechanistic explanations in textual responses to science questions with association rule mining. Paper presented at the Annual Meeting of the American Educational Research Association. Washington, D.C.

- Guo, Y., Xing, W., & Lee, H.-S. (2015). Identifying students’ mechanistic explanations in textual responses to science questions with association rule mining. Paper presented at the workshop for Data Mining for Educational Assessment and Feedback (ASSESS 2015) during International Conference on Data Mining 2015, Atlantic City, NJ.

- Pallant, A., Lee, H.-S., & Pryputniewicz, S. (2013, April). Promoting Scientific Argumentation with Computational Models. Paper presented at the Annual Meeting of the National Association for Research in Science Teaching (NARST), Puerto Rico.

- Lee, H.-S., Pallant, A., Pryputniewicz, S., & Liu, O. L. (2013, April). Measuring Students’ Scientific Argumentation Associated with Uncertain Current Science. Paper presented at the Annual Meeting of the National Association for Research in Science Teaching (NARST), Puerto Rico.

- Pallant, A., & Lee, H.-S. (2011, April). Characterizing uncertainty with middle school students’ scientific arguments. Paper presented at the Annual Meeting of the National Association for Research in Science Teaching (NARST), Orlando, FL.

Blog Posts

Learn more about the High-Adventure Science project at the Concord Consortium blog.

- New Earth Science Website Hub in Collaboration with National Geographic Society

- Student-friendly video on scientific argumentation

- Teaching Earth and environmental science remotely

Activities

View, launch, and assign activities developed by this project at the STEM Resource Finder.