GRASP

Importance

How can gesture help students construct explanations of scientific phenomena, particularly those with unseen structures and unobservable mechanisms, such as molecular interactions? With the University of Illinois, Urbana-Champaign, we’re designing and researching gesture-controlled computer simulations that use motion-sensing input technologies.

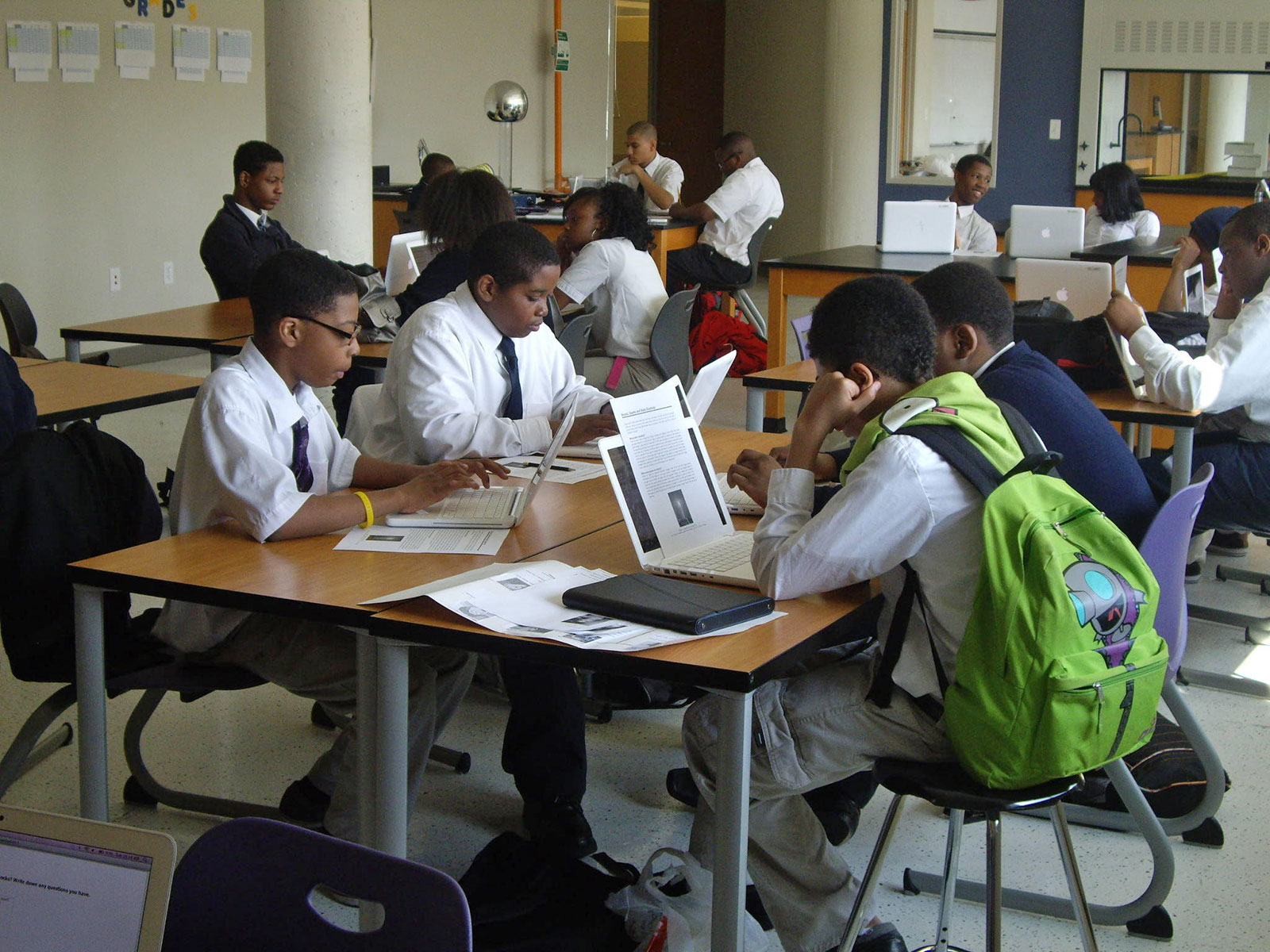

GRASP (Gesture Augmented Simulations for Supporting Explanations) studies the role that motions of the body play in forming explanations of scientific phenomena. We’re conducting empirical studies with middle school students and gathering data that can be applied to improving educational tools. We’re also designing and testing the addition of gesture control to computer simulations using motion-sensing input devices, to see whether these digital technologies can guide learners to perform physical actions that help them comprehend, recall, and retain what they learn.

This work brings together the emerging fields of embodied cognition and human-computer interaction (HCI). Embodied cognition research has provided evidence that human reasoning is deeply rooted in the body’s interactions with the physical world. Some researchers believe that it is impossible to separate the nature of thinking from the bodies we inhabit, and hence that learning and understanding are shaped by the actions of our bodies. There is still much to be understood about the kinds of movements that support the development of specific ideas, particularly ideas about unobservable mechanisms, which are the focus of the GRASP project.

Inexpensive, commercially available devices now make it possible to control and navigate a computer using body motion. Microsoft’s Kinect and the Leap Motion system are two leading examples. Educational researchers have taken note of these developments and of the potential for new embodied interaction techniques that facilitate student learning. We hope to identify embodied learning opportunities that can be created with software freely available on the Web and with relatively inexpensive interface devices, making them accessible to a broad audience of learners.

Research

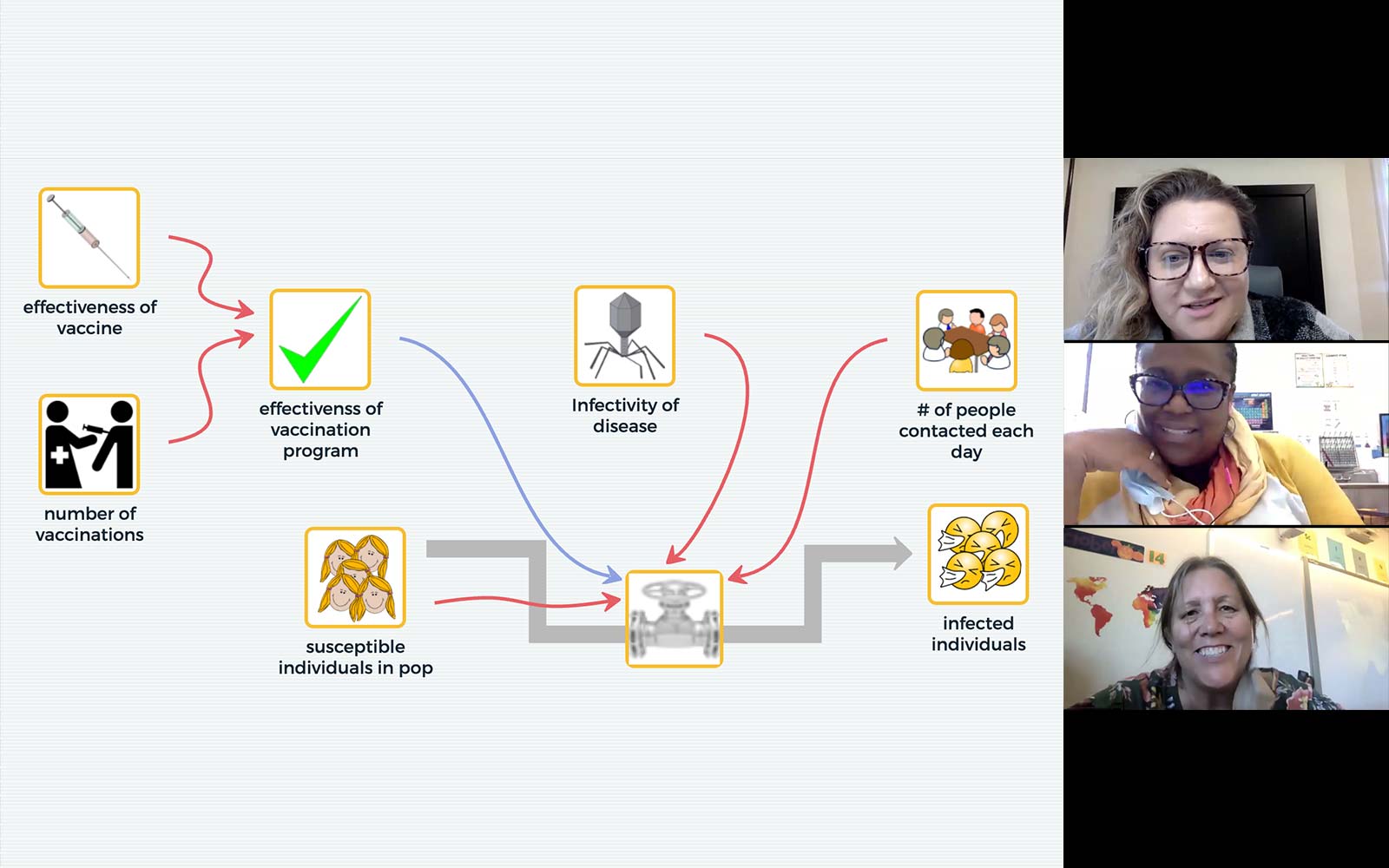

We seek to identify types of body motion that support causal explanations of observable phenomena. Central to this study are these explanation-promoting body motions, which we call embodied explanatory expressions (EEEs). We’re researching:

- Characteristics of embodied expressions that support scientific reasoning at the descriptive level (observations of visible phenomena) and at the explanatory level (causal accounts that include hidden structures and unseen mechanisms).

- EEEs that facilitate reasoning about three critical science topics: molecular interactions, heat transfer, and Earth systems.

- How online simulation environments can effectively integrate EEEs into their interface design.

Publications

- The Concord Consortium (2017). Experimenting with extended reality in our innovation lab. @Concord, 21(2), 7.

- Kimball, N. (2016). Grasping invisible concepts. @Concord, 20(1), 4-6.

- Hart, C. (2016). Under the hood: Hands-on interactive activities with Leap Motion. @Concord, 20(2), 14.